AI Will Not Replace Product Teams. It Will Expose Weak Ones

The narrative today is that AI app builders and product development tools will replace product teams. You see headlines claiming that AI will design, build, and ship products end to end. For early demos and simple tools, this can look true.

You can spin up a basic website, landing page, or prototype in hours using AI-powered builders.

But as we work on real products across websites, mobile apps, and complex digital platforms, the reality on the ground is very different. Building a simple tool is easy. Building a product that scales, performs well, stays secure, and delivers real user value is not.

For SME and enterprise products, AI is not replacing product teams. It is magnifying their weaknesses. Poor product strategy, unclear requirements, weak UX thinking, and fragile engineering foundations become visible much faster once AI is added to the process.

Let us break this down and see why AI is not replacing product teams, but exposing the weak ones.

What “Weak Product Teams” Look Like in Real Life

When a product struggles in the market, the root cause is rarely the tools being used. It is the way teams think, decide, and work together. AI does not create weak teams. It exposes the gaps that already exist.

Based on what we see while building websites, mobile apps, and complex digital products for growing businesses and enterprises, here are some real-world patterns that signal a weak product team.

No clear product strategy or success metrics

Weak teams start building before agreeing on what success looks like. There is no clear product goal, no defined user outcome, and no shared metric to measure impact.

AI then becomes a fast way to ship features without direction. The result is activity without progress. Teams feel busy, but the product does not move closer to its business goals.

Actionable fix: define one core user problem to solve per release and attach a measurable outcome to it. This could be conversion rate, activation, retention, or task completion time. AI should support execution after direction is set, not replace strategic clarity.
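One way to keep this fix honest is to write the release goal down as data, so success is checked against an agreed threshold instead of judged after the fact. The sketch below is a minimal illustration under stated assumptions: `ReleaseGoal` and `rate` are hypothetical names, and the example problem and numbers are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReleaseGoal:
    """Hypothetical record: one core user problem per release, tied to one metric."""
    problem: str                   # the single user problem this release targets
    metric: str                    # e.g. "activation rate", "task completion time (s)"
    baseline: float                # value measured before the release
    target: float                  # value the team agreed counts as success
    higher_is_better: bool = True  # set False for metrics like completion time

    def is_met(self, observed: float) -> bool:
        """Return True if the observed post-release value reaches the target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def rate(successes: int, total: int) -> float:
    """Share of users who completed a step; returns 0.0 for an empty cohort."""
    return successes / total if total else 0.0


if __name__ == "__main__":
    # Illustrative numbers only, not real product data.
    goal = ReleaseGoal(
        problem="New users abandon setup before connecting their data",
        metric="activation rate",
        baseline=0.32,
        target=0.40,
    )
    print(goal.is_met(rate(successes=430, total=1000)))  # True
```

The point of the structure is that the target is fixed before anything is built, so AI-accelerated work can be judged against it rather than against how much was shipped.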

Features shipped without user insight or validation

Weak teams build based on assumptions, internal opinions, or what competitors are doing. AI makes this worse by enabling teams to generate features quickly without talking to users. This leads to polished features that solve the wrong problems.

Over time, products become bloated with low-impact functionality that few users adopt.

Actionable fix: put a simple validation loop in place before shipping. This can be short user interviews, usability tests, or even internal dogfooding with real scenarios. Use AI to summarize feedback or spot patterns, but make sure real user input shapes what gets built.

Design is treated as UI polish instead of experience design

AI design tools can generate screens quickly, which creates the illusion of progress. But good products are built around user journeys, edge cases, and emotional context. When design is reduced to UI, teams miss friction points like confusing flows, unclear states, and broken handoffs between features.

Actionable fix: define experience goals before screens. Map the user journey, identify drop-offs, and design for outcomes such as faster task completion or reduced errors. Use AI for layout exploration, not for defining how the experience should work.

Engineering teams coding without shared architecture principles

AI tools are exceptional at generating lines of code but poor at system design. GitClear research published in 2024 and 2025, covering more than 200 million lines of code, found that code churn (code rewritten within two weeks of being written) has surged, while refactoring has fallen by 60 percent.

When code is generated faster than it is restructured, duplication and inconsistent patterns pile up. The result is fragile systems that break as they scale.
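To make the churn idea concrete, here is a simplified sketch that measures the share of newly written lines changed again within two weeks. This is an assumption-laden approximation, not GitClear's actual methodology: `LineChange` and `churn_rate` are hypothetical names, and the input is assumed to come from your own version-control history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# "Rewritten within two weeks", as described above.
CHURN_WINDOW = timedelta(days=14)


@dataclass(frozen=True)
class LineChange:
    """Hypothetical record for one authored line of code."""
    authored_at: datetime                # when the line was first committed
    next_changed_at: Optional[datetime]  # when it was next edited or deleted, if ever


def churn_rate(changes: list[LineChange], window: timedelta = CHURN_WINDOW) -> float:
    """Fraction of authored lines that were changed again within `window`."""
    if not changes:
        return 0.0
    churned = sum(
        1
        for c in changes
        if c.next_changed_at is not None
        and c.next_changed_at - c.authored_at <= window
    )
    return churned / len(changes)


if __name__ == "__main__":
    t0 = datetime(2025, 1, 1)
    sample = [
        LineChange(t0, t0 + timedelta(days=3)),   # rewritten quickly: counts as churn
        LineChange(t0, t0 + timedelta(days=20)),  # changed later: not churn
        LineChange(t0, None),                     # never touched again
        LineChange(t0, None),
    ]
    print(churn_rate(sample))  # 0.25
```

Tracked over time, a rising value for a metric like this is one early signal that generated code is being written faster than it is being designed.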

Actionable fix: set architecture principles and review standards before using AI at scale. Define how services, data, and integrations should work. Treat AI output as a draft that must pass engineering review, not as production-ready code.

Stakeholders unclear on who owns product decisions

Teams with no clear owner for product decisions will be the first to struggle when AI is added to the workflow. When no one owns product outcomes, decisions bounce between founders, managers, designers, and engineers. AI adds more noise by producing multiple options that no one is accountable for choosing. This slows teams down and creates conflicting priorities.

Actionable fix: assign clear product ownership for each initiative. One person should own the problem, the outcome, and the final call. AI can provide inputs and alternatives, but decision accountability must remain human. Clear ownership leads to faster alignment and fewer rework cycles.

How Strong Product Teams Use AI as Leverage

To be clear, AI does help. It speeds up exploration and reduces repetitive work. It works well for early drafts, idea generation, code scaffolding, and quick analysis. We use AI as leverage in our own design and tech workflows.

The difference is how you use it. Strong product teams do not hand over thinking and decisions to AI. They use it to move faster on execution while keeping ownership of product direction, experience, and quality. Let us look at how strong teams, including ours at Tenet, use AI in practice.

AI as a co-pilot, not a decision-maker

Strong teams treat AI like a fast assistant, not a product owner. AI can suggest options, summarize research, or propose approaches, but final decisions stay with the team. This keeps accountability clear and avoids blind acceptance of outputs.

When teams let AI decide priorities or solutions, they lose product clarity. Actionable use: ask AI for multiple options, then evaluate them against user needs, business goals, and technical constraints. Use AI to speed up thinking, not to replace judgment.

Designers use AI for exploration, not final UX decisions

Design teams use AI to explore layouts, content variations, and early concepts. This helps them test ideas faster and avoid starting from a blank page. But final UX decisions are based on user behavior, usability testing, and product context.

AI does not understand real user pain points or edge cases. Actionable use: generate wireframe ideas or content drafts with AI, then validate flows with real users and refine journeys based on their feedback. Keep experience design grounded in research, not in AI output.

Engineers use AI for acceleration, not architecture

Strong engineering teams use AI to speed up routine work like boilerplate code, small utilities, and test cases. They do not rely on AI for system design, data models, or scalability decisions. Architecture choices require a deep understanding of the product, its users, and the long-term roadmap.

Actionable use: let AI help with repetitive coding tasks, but keep architecture reviews, performance decisions, and security design in human hands. Treat AI output as a draft that must meet engineering standards before it ships.

Product managers use AI to analyze inputs, not define outcomes

Product managers use AI to summarize research, cluster feedback, and analyze trends from large sets of data. This saves time and helps spot patterns faster.

But defining what to build, why it matters, and how success is measured stays a human responsibility. AI does not own the product vision or business goals.

The Productivity Perception Gap

Many tech leaders see AI as a direct productivity boost. The assumption is simple: add AI to the workflow and teams will move faster. On the surface, it feels true. Code gets generated quickly. Designs appear in seconds. Content and documentation move faster than before. Teams feel productive because output increases.

But the reality is more nuanced. There is a massive gap between feeling fast and being fast. A 2025 randomized controlled trial by METR, run with experienced open-source developers working in their own repositories, found that developers believed AI had made them 20 percent faster, when it had actually made them 19 percent slower on average. Much of the slowdown was attributed to time spent reviewing, fixing, and debugging AI suggestions that were almost, but not quite, right.
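To show how large that gap is in practice, here is a worked example on a hypothetical 60-minute task. The task length is an illustrative assumption, not a figure from the study, and the example reads "20 percent faster" as 20 percent less time and "19 percent slower" as 19 percent more time.

```latex
\begin{aligned}
T_{\text{baseline}} &= 60\ \text{min} && \text{(hypothetical task, no AI)}\\
T_{\text{perceived}} &= 60 \times (1 - 0.20) = 48\ \text{min}\\
T_{\text{actual}} &= 60 \times (1 + 0.19) = 71.4\ \text{min}\\
T_{\text{actual}} - T_{\text{perceived}} &= 23.4\ \text{min} && \left(\tfrac{23.4}{48} \approx 49\%\ \text{more than expected}\right)
\end{aligned}
```

In this illustration the work takes roughly half again as long as the developer thinks it did, and that unseen time is what tends to go missing from delivery estimates.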

This shows that speed at the point of creation does not equal speed to production. AI can shift effort from building to fixing, which often goes unnoticed in planning and delivery timelines.

This gap becomes more visible in real product teams when AI is used without strong review processes, clear ownership, and quality standards. Output goes up, but rework, regressions, and hidden complexity increase too. Over time, teams mistake activity for progress and velocity for value.

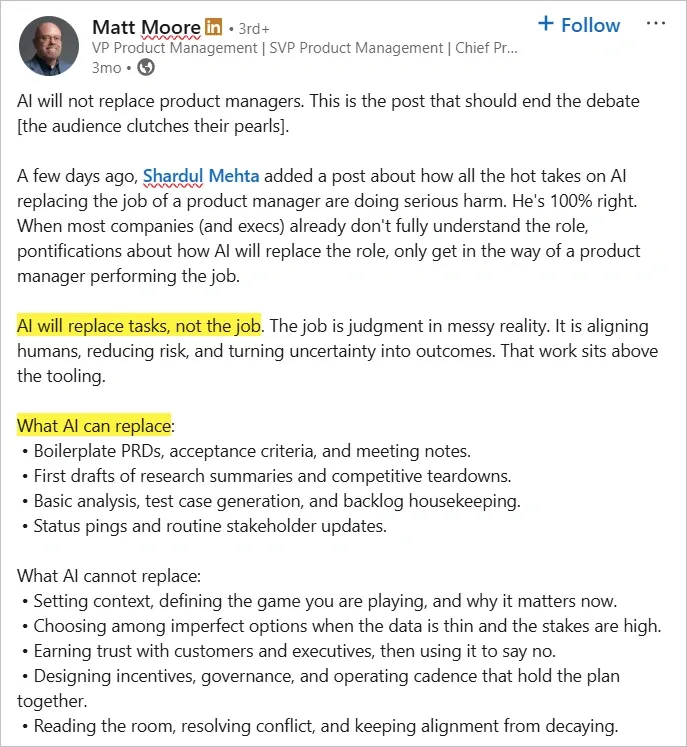

Here’s what a VP of Product Management has to say about AI replacing product management tasks, not jobs:

What the productivity perception gap creates in real teams:

- False confidence in delivery timelines

- Underestimated QA and debugging effort

- More rework after release

- Hidden performance and security issues

- Slower long-term velocity

- Burnout from constant fixes

The Future Belongs to Teams Who Think, Not Just Ship

In the next few years, this gap will only grow. More teams will ship faster with AI, but many of them will also ship more AI slop. Half-working features, fragile code, generic UX, and poorly thought-out flows will pile up. And when real users arrive, those teams will still need real humans to fix the mess. AI will not remove the need for strong product thinking. It will make the cost of weak thinking more visible.

No matter how tools evolve, we at Tenet will continue to use AI as leverage, not as a shortcut. Our designers, engineers, and product teams use AI to move faster on execution while staying responsible for strategy, experience design, system architecture, and quality.

We work across industries and build complex digital solutions end to end, from websites and mobile apps to large-scale platforms and custom products. For us, AI is part of the workflow. Strong product teams are the foundation.
